The traditional philosophy of securing a network by building a high, impenetrable wall around it has become a strategic liability for organizations that must share data across cloud platforms and international supply chains. Government departments and critical national infrastructure providers are in a difficult position: they must maintain very high levels of security while keeping pace with rapid digital innovation. To address this, the UK’s National Cyber Security Centre (NCSC) has published guidance that moves away from outdated, hardware-centric models toward a dynamic, pipeline-based architecture. This marks a fundamental departure from treating security as a static barrier; instead, security becomes an inherent, ongoing characteristic of the data’s journey from one network to another.

This transition matters because modern data movement is no longer a simple transaction between two trusted machines in the same building. Information now travels through a complex web of application programming interfaces (APIs), third-party services, and hybrid cloud environments, where a single vulnerability in a communication protocol can compromise an entire national database. By redefining cross-domain security, the NCSC provides a strategic roadmap for maintaining resilience in an era where the boundary between “inside” and “outside” has largely vanished. The framework is not merely a suggestion for better hygiene but a structural change needed to protect the digital backbone of modern society.

Moving Beyond the Digital Gatekeeper: A New Era for Data Flow

For decades, the standard approach to cross-domain security relied on the installation of a monolithic hardware appliance, often referred to as a digital gatekeeper. These devices were designed to act as a singular point of control, either permitting or blocking data based on a rigid set of pre-defined rules. While this method offered a sense of absolute control, it has increasingly become a bottleneck for organizations that require real-time data synchronization and high-speed cloud interactions. The rigid nature of these legacy systems meant that any change in business requirements necessitated a cumbersome and expensive reconfiguration of hardware, often slowing down essential public services and industrial operations.

The shift away from these “point solutions” is driven by the recognition that a single device can no longer account for the myriad ways data is manipulated and transformed during its lifecycle. The NCSC argues that the old “hard shell” approach creates a false sense of security, where the internal network is assumed to be safe once the perimeter is cleared. In reality, modern threats often bypass these checkpoints by hitchhiking on legitimate data streams or exploiting the very protocols that the gatekeeper is designed to monitor. Consequently, the focus is moving toward a more holistic view of data flow, where security is distributed across multiple stages rather than being concentrated in one vulnerable piece of equipment.

By embracing a more fluid architectural model, organizations can finally decouple their security functions from their physical infrastructure. This flexibility allows for the integration of modern software-defined networking and cloud-native tools that can scale alongside the data they protect. This new era is defined by the ability to inspect, transform, and validate data at various points in its journey, ensuring that no single failure can lead to a catastrophic breach. It represents a transition from a mindset of total exclusion to one of managed, high-assurance inclusion, where data flows are enabled by design rather than restricted by default.

Why Legacy Security Models Can No Longer Keep Pace

The urgency behind the NCSC’s new framework stems from a fundamental shift in how modern organizations operate on a daily basis. The days when vital information sat safely in a single, disconnected room are long gone; today, records, sensor data, and communication logs must move constantly across differing “zones of trust.” Whether it is a utility company importing real-time diagnostics from field sensors or a government department syncing sensitive citizen records with an external cloud service, the risk profile has fundamentally changed. Legacy systems were built for a binary environment where a network was either “high side” or “low side,” but this distinction is increasingly irrelevant in a hyper-connected world.

Furthermore, modern threats have become far more nuanced than the simple viruses and known malware that legacy gatekeepers were designed to catch. State-sponsored actors and sophisticated criminal syndicates now use advanced tools to exploit protocol-level weaknesses that traditional hardware often misses. These attackers do not always try to break down the door; instead, they manipulate the data format or exploit the logic of the receiving application. The NCSC emphasizes that security must be an inherent characteristic of the data’s entire journey, because a checkpoint at the border is useless if the attacker has already found a way to masquerade as a legitimate user or an authorized process.

Moreover, the complexity of global supply chains means that organizations often have to trust data coming from sources they do not fully control. A legacy model that treats all external data as a threat to be blocked would paralyze modern operations, while a model that trusts everything once it passes a basic scan is dangerously naive. The new guidance acknowledges this reality by moving toward a model where trust is relative and must be continuously earned. This requires a sophisticated understanding of how data is structured and how it interacts with the destination system, moving security deeper into the application layer where it can provide more meaningful protection against modern exploits.

From Monolithic Appliances to Flexible Security Pipelines

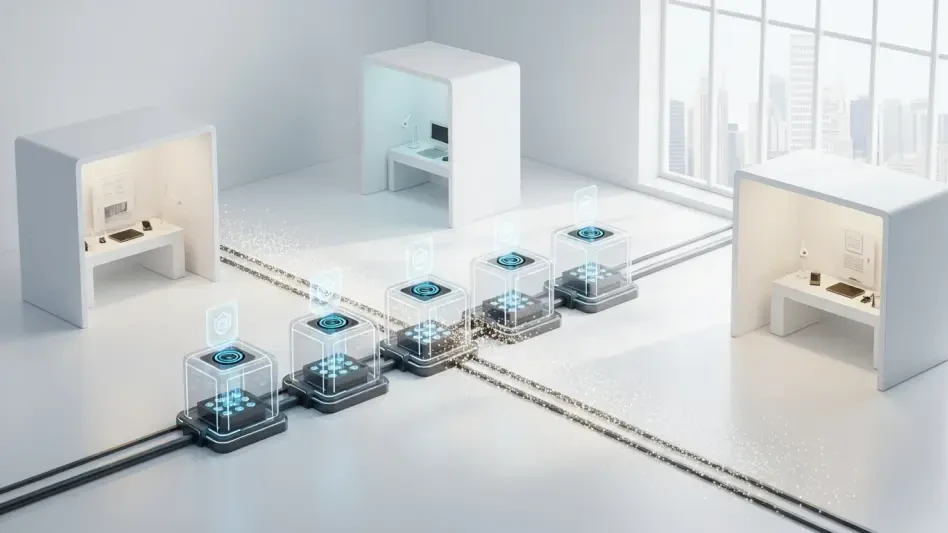

The NCSC’s guidance centers on the concept of a “security pipeline”: a sequence of specific functions designed to progressively build confidence in data as it moves between systems. Instead of asking one piece of hardware to perform every security task, this approach breaks the process down into “logical control points,” each handling a specific, isolated task such as protocol validation, transport termination, or content inspection. By separating the security function from the physical tool, organizations gain the flexibility to combine hardware and software components tailored to their particular risks and operational needs.
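To make the idea of logical control points more concrete, here is a minimal Python sketch of a pipeline built from independent stages. It is an illustration, not an NCSC-specified design: the `SecurityPipeline` class, the `RejectedData` exception, and the three stage functions are hypothetical, and the checks they perform (a newline-terminated ASCII record in `KEY=VALUE` form) are assumed purely for the example.

```python
from dataclasses import dataclass
from typing import Callable, List, Tuple


class RejectedData(Exception):
    """Raised by a control point when data fails its check (hypothetical)."""


# A logical control point takes bytes and returns (possibly transformed) bytes.
Stage = Callable[[bytes], bytes]


@dataclass
class SecurityPipeline:
    stages: List[Tuple[str, Stage]]

    def run(self, data: bytes) -> bytes:
        # Each stage sees only the previous stage's output; any rejection
        # stops the flow so nothing is delivered (fail closed).
        for name, stage in self.stages:
            try:
                data = stage(data)
            except RejectedData as exc:
                raise RejectedData(f"{name}: {exc}") from exc
        return data


def validate_protocol(data: bytes) -> bytes:
    # Assumed protocol: a single newline-terminated ASCII record.
    if not data.endswith(b"\n") or not data.isascii():
        raise RejectedData("malformed record")
    return data


def terminate_transport(data: bytes) -> bytes:
    # Stand-in for transport termination: strip the framing so later
    # stages handle only the payload.
    return data[:-1]


def inspect_content(data: bytes) -> bytes:
    # Assumed content rule: an alphanumeric key, "=", then a value.
    key, sep, _value = data.partition(b"=")
    if not sep or not key.isalnum():
        raise RejectedData("unexpected content structure")
    return data


pipeline = SecurityPipeline([
    ("protocol", validate_protocol),
    ("transport", terminate_transport),
    ("content", inspect_content),
])
print(pipeline.run(b"temp=21\n"))  # b'temp=21'
```

Each stage can be swapped, reordered, or reimplemented in hardware without changing the others, which mirrors the decoupling the guidance describes. Because any rejection halts the flow, the pipeline fails closed.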

Within this pipeline architecture, the model divides systems into “zones of trust” and recognizes that data entering a zone (import) requires different safeguards from data leaving it (export). For instance, an import pipeline might focus on stripping potentially malicious active content, such as macros or scripts, from a document, whereas an export pipeline would prioritize preventing unauthorized data leakage through strict content filtering and authorization checks. This directional approach concentrates security resources where they are most effective, rather than spreading them thinly across a generic, one-size-fits-all boundary.
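The import direction can be sketched in Python as rebuilding a document from an allowlist of known-safe parts, rather than trying to spot malicious code. This assumes a hypothetical document model (a dictionary with `title`, `body`, and optional entries such as `macros` or `scripts`); real sanitizers operate on actual file formats and are far more involved.

```python
from typing import Any, Dict

# Hypothetical document model: only these parts count as passive content.
SAFE_PARTS = ("title", "body")


def import_sanitize(doc: Dict[str, Any]) -> Dict[str, str]:
    # Rebuild the document from known-safe parts only. Anything else,
    # including macros, scripts, or parts never seen before, is dropped
    # rather than inspected.
    clean = {}
    for part in SAFE_PARTS:
        if part in doc:
            if not isinstance(doc[part], str):
                raise ValueError(f"unexpected type for {part!r}")
            clean[part] = doc[part]
    return clean


incoming = {"title": "Report", "body": "Quarterly figures", "macros": "AutoOpen()"}
print(import_sanitize(incoming))  # {'title': 'Report', 'body': 'Quarterly figures'}
```

An allowlist fails safe: a new kind of active content that nobody anticipated is discarded by default. An export pipeline would run different stages, such as labeling and authorization checks, on data traveling the other way.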

The model also introduces “security control points”: high-assurance components specifically hardened to resist compromise by malicious data. These points form the backbone of the pipeline, providing a reliable foundation even when other, less trusted parts of the system are under attack. Because the controls are distributed, even if one element, such as a data transformer or an inspection tool, is breached, the malicious activity is contained. This design prevents an attacker from pivoting deeper into a secure zone, so the core of the network remains protected even during an active incident.
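One common way to achieve this containment is to place a simple, high-assurance verifier after a more complex, lower-assurance transformer, so that the verifier, not the transformer, decides what is released. The Python sketch below assumes a hypothetical format (sensor readings such as `AB123: 21.5` converted to CSV lines like `AB123,21.5`); the function names and the regular expression are illustrative only.

```python
import re

# Hypothetical output format: one "AB123,21.5" line per reading.
LINE = re.compile(r"[A-Z]{2}\d{3},-?\d{1,4}\.\d")


def transform(raw: str) -> str:
    # Complex, lower-assurance component: parses loosely formatted input
    # such as "AB123: 21.5; CD456:-3". It may contain bugs or be compromised.
    out = []
    for item in raw.split(";"):
        sensor, _, reading = item.strip().partition(":")
        out.append(f"{sensor.strip()},{float(reading):.1f}")
    return "\n".join(out)


def verify(candidate: str) -> str:
    # Simple, high-assurance control point: every line must match the
    # expected pattern exactly, so even a compromised transformer cannot
    # smuggle extra content past this point.
    if not all(LINE.fullmatch(line) for line in candidate.split("\n")):
        raise ValueError("transformer output failed verification")
    return candidate


def compromised_transform(raw: str) -> str:
    # Simulates a taken-over transformer appending a malicious payload.
    return transform(raw) + "\n<script>exfiltrate()</script>"


print(verify(transform("AB123: 21.5; CD456:-3")))  # prints AB123,21.5 then CD456,-3.0
```

Passing the output of `compromised_transform` to `verify` raises `ValueError`, because the appended line does not match the pattern. The verifier is deliberately far simpler than the transformer, which makes it easier to assure.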

Navigating a Severe and AI-Enhanced Threat Landscape

The NCSC warns that the shift to this new framework is not merely a technical preference but a necessity driven by an increasingly hostile digital environment. Adversaries are increasingly using artificial intelligence to find and attack targets of national significance faster and more efficiently. In this context, the NCSC emphasizes that no single component, regardless of its certification or cost, should be considered invulnerable. Even the highest-assurance security control points must therefore be supported by an architecture that assumes any component may eventually be compromised.

This “defense-in-depth” philosophy is critical in a landscape where AI tools can accelerate the discovery of previously unknown (zero-day) vulnerabilities. In a pipeline architecture, the compromise of a single component does not mean the end of the mission; rather, it is a managed event in which the system’s design prevents the attacker from escalating privileges or accessing unauthorized data. This level of resilience is essential for critical infrastructure providers that cannot afford even an hour of downtime, since a successful breach could cause anything from major financial loss to the disruption of life-saving services.

Furthermore, the NCSC highlights that the threat is no longer limited to the data itself but extends to the metadata and the protocols used for transmission. Attackers are increasingly targeting the management interfaces and the underlying logic of cross-domain solutions. By adopting a pipeline model, organizations can isolate these management functions, ensuring that even if the data processing layer is under attack, the security team retains visibility and control over the flow. This focus on observability is a key pillar of modern defense, allowing teams to detect the subtle anomalies that often precede a major AI-driven offensive.

Applying the NCSC’s Six Core Design Principles

To transition from legacy systems to a modern cross-domain architecture, organizations must adopt a framework built on six fundamental principles that guide every design decision. First, architects should minimize data transfer, ensuring that only absolutely essential information crosses a trust boundary. By choosing simple, well-defined protocols and stripping away unnecessary metadata, they reduce the attack surface before the data even reaches the first checkpoint. Second, trust must be established across the entire stack: security teams should treat all incoming data as untrusted at every layer, from the network up to the application context.
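The first two principles can be illustrated together in Python: the receiving side checks a message at each layer (size, encoding, syntax, structure) and forwards only the fields it actually needs. The size limit and the two-field schema are hypothetical values chosen for the example.

```python
import json
from typing import Any, Dict

MAX_BYTES = 4096  # hypothetical limit, checked before any parsing
REQUIRED = {"record_id": str, "status": str}  # hypothetical minimal schema


def minimize(raw: bytes) -> Dict[str, Any]:
    # Treat the input as untrusted at every layer.
    if len(raw) > MAX_BYTES:
        raise ValueError("message too large")
    text = raw.decode("utf-8")  # UnicodeDecodeError is a ValueError
    msg = json.loads(text)  # JSONDecodeError is a ValueError
    if not isinstance(msg, dict):
        raise ValueError("expected a JSON object")
    out = {}
    for field, field_type in REQUIRED.items():
        value = msg.get(field)
        if not isinstance(value, field_type):
            raise ValueError(f"missing or mistyped field: {field}")
        out[field] = value
    # Every other field (author names, device IDs, timestamps and so on)
    # is dropped, so only essential data crosses the boundary.
    return out


print(minimize(b'{"record_id": "r1", "status": "ok", "author": "j.smith"}'))
# {'record_id': 'r1', 'status': 'ok'}
```

Because every failure surfaces as a `ValueError`, a caller can reject the message with a single handler, and nothing partially parsed is forwarded.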

The third principle focuses on strict export controls, ensuring that only understood and expected data leaves the secure zone. This prevents sensitive information from being exfiltrated by hidden malware or negligent insiders. Fourth, the system must be designed to minimize the impact of a compromised component through rigorous compartmentalization. If a specific data transformer fails or is taken over, it should not have the permissions or the connectivity to affect any other part of the pipeline. This containment strategy is what allows a modern network to remain functional even while under a localized attack.
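A strict export control can be sketched in Python as a fail-closed gate: a message leaves only if it is authorized and matches one of a small number of exactly defined shapes. The message types, field sets, and the `authorized` flag below are hypothetical; in a real system, authorization would come from a separate, trusted source.

```python
from typing import Any, Dict

# Hypothetical export policy: each permitted message type maps to the exact
# set of fields it may carry.
EXPORT_POLICY = {
    "status_report": frozenset({"type", "site", "status"}),
    "ack": frozenset({"type", "ref"}),
}


def export_gate(msg: Dict[str, Any], authorized: bool) -> Dict[str, Any]:
    # Fail closed: anything not explicitly understood and expected is blocked.
    if not authorized:
        raise PermissionError("export not authorized")
    msg_type = msg.get("type")
    if not isinstance(msg_type, str) or msg_type not in EXPORT_POLICY:
        raise PermissionError("unknown message type")
    if set(msg) != EXPORT_POLICY[msg_type]:
        # Extra fields are an easy hiding place for exfiltrated data.
        raise PermissionError("unexpected or missing fields")
    if not all(isinstance(value, str) for value in msg.values()):
        raise PermissionError("non-text value")
    return msg


print(export_gate({"type": "ack", "ref": "42"}, authorized=True))
# {'type': 'ack', 'ref': '42'}
```

The gate never tries to decide whether unexpected data is harmful; it simply refuses anything outside the defined shapes, which keeps the control small enough to audit.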

The final two principles address the human and operational side of security. Fifth, key security controls should be kept as simple as possible to reduce the likelihood of configuration errors and to make the system easier to audit; a complex security system is often an insecure one, because hidden dependencies can create unforeseen vulnerabilities. Sixth, the architecture must prioritize observability and manageability. Security teams need to monitor data flows in real time, record every operation, and respond precisely to anomalies. This level of insight helps keep the architecture effective as the threat landscape evolves.
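As a small illustration of the observability principle, the Python sketch below writes one structured audit record per pipeline decision and computes a simple rejection rate that a monitoring team might alert on. The logger name, the record fields, and the idea of alerting on rejection rate are assumptions for the example; logging a hash rather than the payload itself keeps sensitive content out of the logs.

```python
import hashlib
import json
import logging
from datetime import datetime, timezone
from typing import Dict, List

logging.basicConfig(level=logging.INFO, format="%(message)s")
audit_log = logging.getLogger("cds.audit")  # hypothetical logger name


def record(stage: str, payload: bytes, decision: str) -> Dict[str, object]:
    # One structured entry per operation: when, where, what was decided,
    # and a SHA-256 digest so a flow can be traced without storing the data.
    entry = {
        "time": datetime.now(timezone.utc).isoformat(),
        "stage": stage,
        "sha256": hashlib.sha256(payload).hexdigest(),
        "bytes": len(payload),
        "decision": decision,
    }
    audit_log.info(json.dumps(entry, sort_keys=True))
    return entry


def reject_rate(entries: List[Dict[str, object]]) -> float:
    # Share of operations rejected; a sudden rise may be an early warning sign.
    if not entries:
        return 0.0
    return sum(e["decision"] == "reject" for e in entries) / len(entries)


audit_entries = [
    record("content", b"temp=21", "accept"),
    record("content", b"<script>", "reject"),
]
print(reject_rate(audit_entries))  # 0.5
```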

The NCSC’s initiative redirects the focus of national security from static defenses toward continuous assurance and structural resilience. As organizations integrate these pipeline-based architectures, a key expected benefit is reduced lateral movement of threats within government networks. The approach treats security and innovation not as mutually exclusive but as two sides of the same coin in a digital-first economy, with the aim of preserving data integrity even as data passes through hostile environments.

Looking forward, wider adoption of these six core principles could enable a more standardized approach to cross-domain interactions, simplifying the secure integration of emerging technologies such as edge computing and decentralized identity systems. The NCSC’s work may also serve as a benchmark for protecting modern infrastructure without sacrificing the speed and connectivity the future requires. Organizations that follow this path may find that their security posture is no longer a hurdle but a competitive advantage, enabling them to collaborate more freely and securely on a global stage.