The rapid proliferation of artificial intelligence meeting assistants has introduced a silent yet pervasive vulnerability into the modern enterprise environment, fundamentally altering the nature of the insider threat. While corporate security teams have historically focused on disgruntled employees or external hackers, the most significant contemporary risk often arrives in the form of a helpful productivity tool offered through a “free trial.” These AI-powered assistants, including popular integrations like Otter, Fireflies, and various proprietary copilots, are quietly embedding themselves into the most sensitive layers of business operations. By joining virtual calls on platforms such as Zoom, Microsoft Teams, and Google Meet, these tools operate as a persistent third-party surveillance layer. They capture, process, and store sensitive intellectual property outside the established secure perimeter of the organization, often without explicit authorization or even the full awareness of the participants involved. This trend represents a paradoxical shift where the pursuit of extreme efficiency has inadvertently created a massive gateway for data exfiltration and unauthorized surveillance.

The Productivity Paradox: Efficiency at the Expense of Security

The widespread adoption of AI meeting assistants is fueled by what might be called a productivity illusion, in which the immediate benefits of automated documentation overshadow long-term security risks. In a distributed work environment, the administrative friction of manual notetaking looks like an inefficiency that AI can seamlessly resolve. These tools provide high-fidelity transcriptions, extract action items, and generate concise summaries, allowing employees to focus entirely on the conversation. However, this drive for speed has significantly outpaced the appetite for security scrutiny. In the current deployment model, convenience and data safety are often treated as competing priorities, and convenience usually wins. Organizations frequently allow these bots to enter meeting rooms without a formal vetting process, prioritizing the individual employee’s workflow over the collective security of the enterprise’s intellectual property and strategic communications.

Unlike a human colleague who understands the nuances of corporate confidentiality, an AI notetaker lacks the contextual awareness to distinguish between a casual introduction and a highly sensitive trade secret. These bots record everything with equal diligence, from valuation discussions and undisclosed clinical trial results to board-level maneuvers and legal strategies. This lack of discernment creates a digital “Pandora’s box” of cybersecurity and legal challenges. Once the data is captured, it is frequently funneled into third-party large language models for processing and refinement. The data-handling practices of these third-party vendors are often opaque and rarely subject to the rigorous enterprise audits required for other business-critical software. Consequently, sensitive information that was once contained within a private boardroom is now living on external servers, governed by terms of service that favor the service provider rather than the enterprise.

Technical Vulnerabilities: OAuth and the Expanded Attack Surface

A critical but frequently underappreciated risk associated with AI notetakers is the massive expansion of the enterprise attack surface through broad OAuth permissions. To function effectively, these tools require extensive access to a user’s calendar, email, and meeting platform. These permission grants are not merely localized to a single application; they create persistent pathways into the broader enterprise identity infrastructure. A single compromised token or a vulnerability within the third-party provider’s system can provide a malicious actor with a direct route to sensitive corporate data. This architectural weakness mimics high-profile security failures seen in other cloud-based integrations, where the interconnectedness of modern software becomes its primary point of failure. Security teams are finding it increasingly difficult to manage these decentralized entry points, as employees frequently grant these permissions without consulting the IT department.
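As a rough illustration of the kind of review a security team might run over these permission grants, the following sketch flags grants that carry broad scopes and were never approved by an administrator. The scope names, the `OAuthGrant` record, and the sample data are all hypothetical; real identity providers use their own scope identifiers and export formats.

```python
from dataclasses import dataclass

# Hypothetical scope names chosen for illustration only; real providers
# define their own identifiers for calendar, mail, and meeting access.
BROAD_SCOPES = {
    "calendar.readwrite",
    "mail.read",
    "meetings.recording.read",
    "offline_access",  # assumed here to mean long-lived refresh tokens
}


@dataclass(frozen=True)
class OAuthGrant:
    """One user-to-application permission grant (assumed export format)."""
    user: str
    app: str
    scopes: frozenset
    admin_approved: bool


def flag_risky_grants(grants):
    """Return (grant, broad_scopes) pairs for unapproved grants holding broad scopes."""
    findings = []
    for grant in grants:
        broad = sorted(grant.scopes & BROAD_SCOPES)
        if broad and not grant.admin_approved:
            findings.append((grant, broad))
    return findings


grants = [
    OAuthGrant("alice@example.com", "notetaker-bot",
               frozenset({"calendar.readwrite", "mail.read", "offline_access"}), False),
    OAuthGrant("bob@example.com", "approved-crm",
               frozenset({"calendar.readwrite"}), True),
    OAuthGrant("carol@example.com", "timer-widget",
               frozenset({"profile"}), False),
]

for grant, broad in flag_risky_grants(grants):
    print(f"{grant.user} -> {grant.app}: {', '.join(broad)}")
```

In this sketch only the unapproved notetaker grant is reported; the approved integration and the narrowly scoped widget pass. Which scopes count as broad is a policy decision, not something the code can determine on its own.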

Beyond the initial access risks, AI notetakers create a persistent third-party memory that exists entirely outside of internal corporate controls. These tools do more than just listen; they sync and store data to external cloud environments to facilitate cross-platform accessibility and future AI training. This results in a permanent, searchable record of confidential conversations that a company cannot easily delete or secure. Furthermore, these bots are notoriously susceptible to spoofing and unauthorized entry. Malicious actors can use manipulated calendar invitations or misconfigured meeting links to insert unauthorized bots into private calls. Once inside, these digital observers can exfiltrate sensitive data without triggering traditional network or endpoint security alerts. Most standard security protocols are designed to monitor structured data flows and infrastructure anomalies, leaving them effectively blind to the semantic context of a meeting being recorded by a third-party bot.
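One way to picture a defense against uninvited bots is a roster check that compares who is actually in the call against the invitation list and a short allowlist of sanctioned assistants. This is a minimal sketch under stated assumptions: the `Participant` record, the allowlist address, and the idea that the meeting platform exposes participant emails are all hypothetical, and matching on email alone would not stop a well-spoofed identity.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass(frozen=True)
class Participant:
    display_name: str
    email: Optional[str]  # None models an unauthenticated guest


# Hypothetical allowlist of sanctioned assistant accounts.
APPROVED_BOT_EMAILS = {"notes@internal.example.com"}


def unexpected_participants(participants, invited_emails):
    """Return participants who were neither invited nor on the bot allowlist."""
    allowed = {email.lower() for email in invited_emails} | APPROVED_BOT_EMAILS
    return [
        p for p in participants
        if p.email is None or p.email.lower() not in allowed
    ]


roster = [
    Participant("Dana (CFO)", "dana@example.com"),
    Participant("Internal Notes", "notes@internal.example.com"),
    Participant("Free Trial Notetaker", "bot@unknown-vendor.example"),
    Participant("Guest", None),
]
invited = ["Dana@example.com", "lee@example.com"]

for p in unexpected_participants(roster, invited):
    print(f"Unexpected participant: {p.display_name}")
```

A host or an automated lobby rule could use a list like this to hold unknown attendees before any sensitive discussion begins.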

Legal Realities: Navigating Privacy Laws and Consent

The use of AI notetakers places modern enterprises in a precarious legal position, particularly regarding privacy and consent statutes that vary significantly across regions. Many jurisdictions operate under “all-party” consent laws (often loosely called “two-party” consent), which require every participant in a confidential communication to agree to being recorded. In states like California, Illinois, and Connecticut, an AI bot that begins recording without a formal announcement or explicit agreement from all parties can violate the law. This creates significant liability for the employer, which may be held responsible for the actions of its employees and the tools they bring into the workspace. Legal teams are increasingly concerned that these unauthorized recordings could lead to a wave of privacy litigation and regulatory fines that far outweigh the productivity gains provided by the technology.
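The consent logic described here can be sketched as a simple gate that a recording tool might apply before it starts capturing audio. The region codes, the function name, and the data shapes are hypothetical, and the region set contains only the three states named above, so it is deliberately not exhaustive. The sketch also assumes that every other region needs just one consenting party, a simplification that legal counsel would have to confirm for any real deployment.

```python
# Only the three states named in the text; an illustrative, incomplete set.
ALL_PARTY_CONSENT_REGIONS = {"US-CA", "US-IL", "US-CT"}


def may_start_recording(participant_regions, consents):
    """Decide whether recording may begin.

    participant_regions: mapping of participant name -> region code
    consents: mapping of participant name -> True if they agreed to recording
    """
    needs_all_parties = any(
        region in ALL_PARTY_CONSENT_REGIONS
        for region in participant_regions.values()
    )
    given = [consents.get(name, False) for name in participant_regions]
    if needs_all_parties:
        # One participant in an all-party region raises the bar for the whole call.
        return all(given)
    # Assumed fallback: a single consenting participant is enough.
    return any(given)


call = {"ana": "US-CA", "ben": "US-TX"}
print(may_start_recording(call, {"ana": True}))
print(may_start_recording(call, {"ana": True, "ben": True}))
```

The design choice worth noting is that the strictest rule among the participants governs the whole call, which is the conservative reading when attendees join from several regions.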

International data regulations, such as the General Data Protection Regulation in the European Union, introduce even more complex obligations regarding data minimization and lawful processing. Many AI notetaking vendors operate under standard terms of service that may fall short of these standards, particularly concerning the transfer of data to processors in third countries. A major point of contention is the “fine print” that often allows vendors to use captured corporate data to train and refine their own models. This means that a company’s proprietary strategy, unique research, or legal defense could eventually inform the outputs of a model used by a direct competitor. Furthermore, in the event of legal proceedings, these transcripts are generally discoverable. Once litigation is reasonably anticipated, preservation obligations can require that the data be retained for evidentiary purposes even if a user attempts to remove it from the platform, turning every recorded meeting into a potential liability.

Digital Trust: Deepfakes and the Social Engineering Frontier

Beyond the immediate concerns of data leakage, the presence of AI in virtual meetings is eroding the fundamental trust required for effective digital collaboration. As AI-generated content becomes more sophisticated and realistic, the risk of deepfake impersonation in professional settings is reaching a critical point. A malicious actor could potentially use the voice biometrics, tonality, and unique personality traits captured by an AI notetaker to create a convincing digital replica of a high-ranking executive. This opens the door to a new era of social engineering, where an AI-generated persona could join a call, participate in a complex discussion, and authorize fraudulent financial transactions or disclose additional corporate secrets. When the “human” on the other side of the screen can be perfectly mimicked by a bot that has studied their every word, the foundation of secure digital business interaction begins to crumble.

This erosion of trust is particularly dangerous during high-value scenarios such as mergers and acquisitions or sensitive regulatory negotiations. In these environments, even a minor breach of trust or a momentary lapse in identity verification can have massive financial and reputational consequences. The danger is not merely that data is being stolen, but that the very identity of the participants is being commodified. Organizations that rely on virtual communication must now contend with the possibility that their internal culture and decision-making processes are being mapped by external AI entities. As these tools continue to collect data on how executives think, speak, and make decisions, the potential for targeted, high-impact social engineering attacks grows with every recorded meeting. Protecting the “conversation layer” of a business has become just as important as protecting its physical and digital infrastructure.

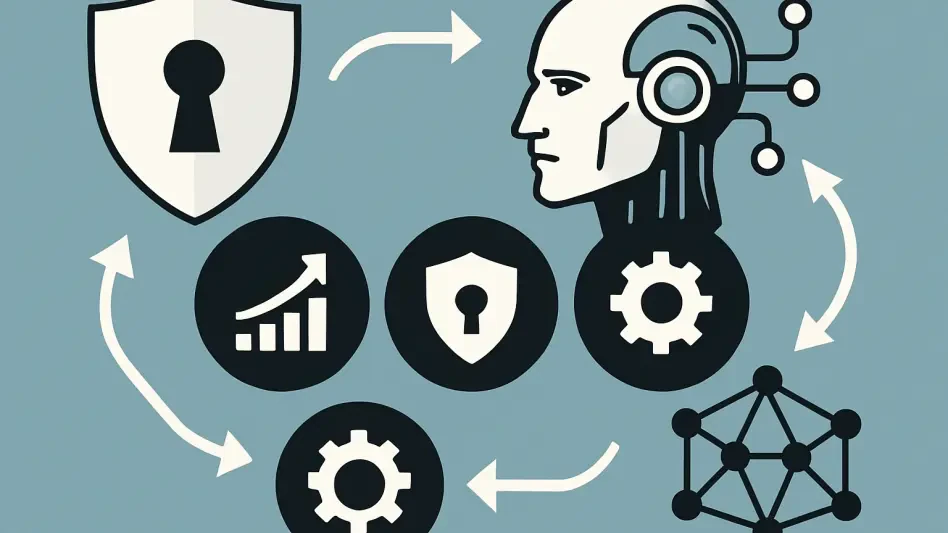

Strategic Evolution: Moving Beyond Traditional Defense

Standard cybersecurity architectures, which primarily consist of firewalls, endpoint detection, and data loss prevention software, are largely ineffective against the nuanced threats posed by AI notetakers. These traditional systems are built to monitor structured data and network traffic, making them essentially “blind” to the semantic meaning and contextual value of a live conversation. A standard security tool will recognize a legitimate connection to a cloud service but will fail to identify that the content being transmitted is a highly sensitive intellectual property discussion. This gap in visibility demonstrates that the threat model has shifted from protecting bits and bytes to protecting the actual intent and context of business communications. Consequently, a new approach to governance is required, one that treats AI interactions with the same level of scrutiny as privileged administrative access.

To effectively manage these risks, enterprises should transition from reactive banning toward a proactive, dedicated AI context security protocol. Banning these tools is often counterproductive, as employees frequently find ways to use them “under the radar” to maintain their productivity levels. Instead, organizations must implement rigorous auditing of OAuth permissions and mandate formal Data Processing Agreements that explicitly forbid the use of corporate data for model training. The ultimate objective should be achieving data sovereignty by utilizing on-premises or private cloud deployments for AI tools. This ensures that sensitive information remains within the enterprise’s internal infrastructure and never touches an unvetted third-party server. By implementing inline, behavioral oversight of all AI interactions, companies can recognize and protect the contextual value of their information in real-time, ensuring that the assistant invited to the meeting does not become the company’s greatest liability.
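The controls in this paragraph can be read as a vetting checklist, sketched below as a small policy gate that lists what still blocks approval of a given tool. The `VendorProfile` fields, the `Deployment` categories, and the sample vendors are hypothetical names introduced for illustration, and a real review would involve far more than four checks.

```python
from dataclasses import dataclass
from enum import Enum


class Deployment(Enum):
    ON_PREMISES = "on_premises"
    PRIVATE_CLOUD = "private_cloud"
    VENDOR_CLOUD = "vendor_cloud"


@dataclass(frozen=True)
class VendorProfile:
    """Hypothetical record of what a security review has established."""
    name: str
    dpa_signed: bool
    dpa_forbids_training: bool
    oauth_scopes_audited: bool
    deployment: Deployment


def approval_blockers(vendor):
    """Return the reasons a tool cannot yet be approved (empty list means approved)."""
    blockers = []
    if not vendor.dpa_signed:
        blockers.append("no signed Data Processing Agreement")
    elif not vendor.dpa_forbids_training:
        blockers.append("DPA does not forbid model training on corporate data")
    if not vendor.oauth_scopes_audited:
        blockers.append("OAuth permissions have not been audited")
    if vendor.deployment is Deployment.VENDOR_CLOUD:
        blockers.append("data leaves enterprise-controlled infrastructure")
    return blockers


trial_tool = VendorProfile("free-trial-notetaker", False, False, False,
                           Deployment.VENDOR_CLOUD)
private_tool = VendorProfile("private-transcriber", True, True, True,
                             Deployment.PRIVATE_CLOUD)

for vendor in (trial_tool, private_tool):
    blockers = approval_blockers(vendor)
    status = "approved" if not blockers else "blocked: " + "; ".join(blockers)
    print(f"{vendor.name}: {status}")
```

Returning the list of blockers rather than a bare yes or no gives employees a path to compliance, which fits the argument that outright bans tend to push usage under the radar.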

Protecting Corporate Assets: A New Strategic Framework

The analysis of AI notetakers shows that the traditional boundary of the enterprise has been permanently altered by the introduction of automated observers. To counter these emerging threats, forward-thinking organizations are moving toward a model of contextual governance in which every AI interaction is treated as a potential data egress point. Security leaders prioritize private AI instances, ensuring that transcription and summarization occur within a controlled environment that prevents data leakage to external models. They also establish strict protocols for meeting attendance, requiring AI bots to undergo a verification process similar to that of a human participant. This shift allows businesses to reap the benefits of AI-driven productivity without sacrificing the integrity of their most valuable intellectual property or compromising the privacy of their employees and clients.

In the final assessment, the companies best positioned to succeed are those that recognize the hidden costs associated with “free” and “convenient” productivity tools. They move away from the “productivity at all costs” mentality and instead adopt a framework that values data sovereignty and conversational privacy. By auditing all third-party integrations and enforcing rigorous data processing agreements, these organizations reclaim control over their digital environment. Legal and security teams work in tandem to ensure that every recorded word is accounted for and protected under regional compliance standards. Ultimately, the best way to protect the value of ideas is to treat the conversation itself as a critical asset. This proactive stance ensures that while AI remains in the room to assist, the company’s strategic advantages and trade secrets remain exclusively its own.