The contemporary digital landscape is being rapidly transformed by autonomous AI agents that possess the unprecedented capability to manage intricate file systems and execute complex code autonomously. This modern AI agent offers a seductive promise: a digital companion that can manage files, browse the web, and execute code with the same authority as a human user. However, this deep integration creates a dangerous paradox where the very tools designed to boost efficiency require the keys to an entire digital life.

The convenience of an autonomous assistant comes with a significant threat to system integrity. As these agents transition from cloud-based chatbots to local system orchestrators, the line between a helpful tool and a high-level security risk becomes dangerously thin. This evolution demands a critical evaluation of whether the productivity gains are worth the potential for a complete compromise of personal and professional data.

The Faustian Bargain: Autonomous Productivity vs. System Security

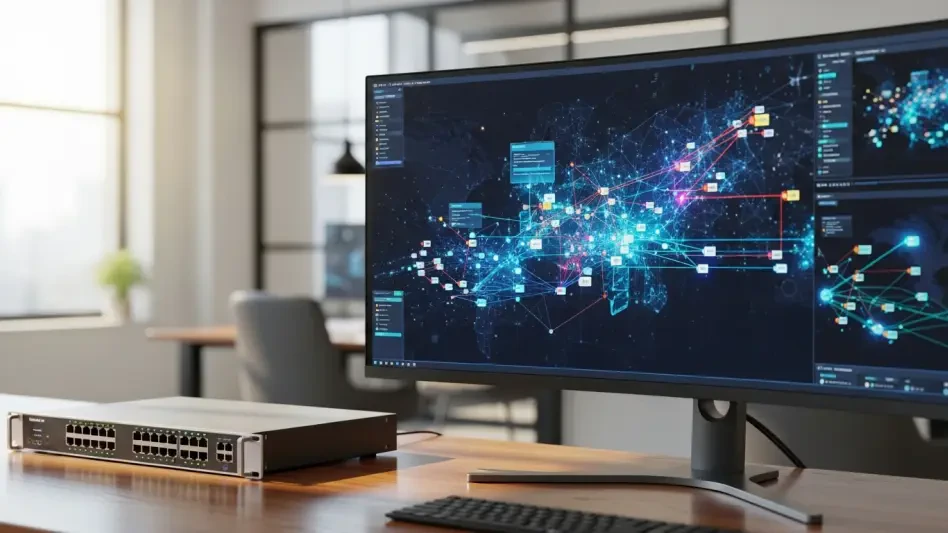

The rapid adoption of platforms like OpenClaw marks a significant shift in how users interact with artificial intelligence in the current year of 2026. Unlike older sandboxed environments, these modern gateways operate with direct access to local machines and browser sessions to perform complex tasks. This evolution has outpaced the development of necessary security protocols, leaving a massive gap that attackers are already looking to exploit.

As developers and enterprise users increasingly integrate these agents into daily workflows, understanding the underlying fragility of this ecosystem is no longer optional. The integration of AI into the root levels of an operating system means that a single vulnerability can grant an attacker total control. This transition represents a fundamental change in the threat model for personal computing, as the agent essentially becomes a high-privileged user that can be manipulated by external inputs.

Modern Architectures: Why the Current Architecture of AI Gateways Matters Now

The most pressing issue in the current landscape is the “plaintext problem,” where platforms store sensitive configuration data, API keys, and long-term memory in unencrypted files at predictable disk locations. This architecture turns a user machine into an open vault for “infostealer” malware, which can easily exfiltrate developer tokens and session logs. Because the agent requires these keys to function, they are often left exposed in the local environment, bypassing traditional cloud security measures.

Furthermore, the concept of “Agent Skills” introduces a new attack vector that many organizations have failed to anticipate. These markdown-based installers can be weaponized to deliver malware that raids SSH keys and cloud credentials upon execution. Once a malicious skill is added to an agent library, it can operate silently in the background, utilizing the agent’s broad permissions to harvest data without triggering traditional antivirus software.

Technical Fragility: Deconstructing the Vulnerabilities Within the Agent Ecosystem

Perhaps most chilling is the weaponization of an agent’s memory, where captured personal context and writing styles allow bad actors to craft hyper-sophisticated phishing campaigns. These logs contain a wealth of personal and professional context, providing the data necessary to perform digital impersonations that are nearly indistinguishable from the real user. The long-term storage of every interaction creates a goldmine for social engineering.

Security analysts point out that current AI agent implementations prioritize immediate functionality over essential safety constraints, creating a systemic risk that spans multiple platforms. Research indicates that because these agents operate under the user’s full authority, any compromise of the local machine grants an attacker immediate control over the entire history of the agent. This lack of isolation means that the AI’s “brain” is effectively shared with any malicious process on the system.

Professional Perspectives: Expert Insights on Systemic Risks and Industry Negligence

The industry consensus is shifting toward the realization that the open “Agent Skills” format is inherently flawed. Malicious scripts can be distributed across various ecosystems with little to no oversight, turning public hubs into potential delivery vehicles for sophisticated cyberattacks. This lack of a centralized, vetted marketplace for AI capabilities has allowed a “wild west” environment to flourish, where convenience is consistently prioritized over data safety.

Experts argue that the design of these gateways often ignores decades of established security principles. By granting an agent the same permissions as the logged-in user, developers have bypassed the principle of least privilege. This systemic negligence has created an environment where the AI agent is not just a tool, but a potential proxy for any attacker who can influence its prompts or access its local configuration files.

Necessary Defense: Strategic Safeguards for the Era of AI Agents

To mitigate these risks, organizations adopted a strict framework that began with banning AI agent tools on corporate devices or machines with access to production systems. A robust “trust layer” was proposed to mediate agent actions, ensuring that every agent operated under a unique identity rather than shared user permissions. These measures were essential to prevent the unauthorized escalation of privileges within sensitive networks.

Practical strategies included implementing the principle of least privilege, where agent permissions were specific, time-bound, and strictly governed by a brokered system. Transitioning from uncontrolled local access to a secure, mediated environment proved to be the only way to evolve these tools into safe, enterprise-ready assets. Security teams eventually moved toward a model where every AI action was logged, audited, and restricted to a dedicated virtual environment to ensure total isolation from the host OS.