AI tooling has reached a tipping point where a single untrained individual can orchestrate complex cyberattacks that previously required a specialized team of state-sponsored engineers. This democratization of digital destruction has shifted the threat landscape from a battle of wits between elite experts to a war of attrition against a relentless volume of automated incursions. Security professionals now face a reality in which the primary adversary is no longer only the sophisticated hacker, but also the sheer accessibility of autonomous attack tooling.

The Democratization of Digital Destruction

The barrier to entry for cybercrime has effectively vanished, replaced by intuitive interfaces where intent matters more than technical expertise. A novice with only a basic grasp of orchestration can now launch large-scale campaigns simply by giving high-level instructions to an AI agent. As a result, the threat is no longer concentrated in a few highly skilled groups; it now includes thousands of low-level actors who can operate at global scale.

We are entering an era in which the danger lies in the industrialization of malice. Elite hackers still exist, but the influx of amateur-led attacks creates background noise that is increasingly difficult to filter. Because these campaigns cost little to launch, attackers can fail repeatedly until a single vulnerability is found, which shows that persistence through automation can be as effective as precision.

The Shift from Sophistication to Scale

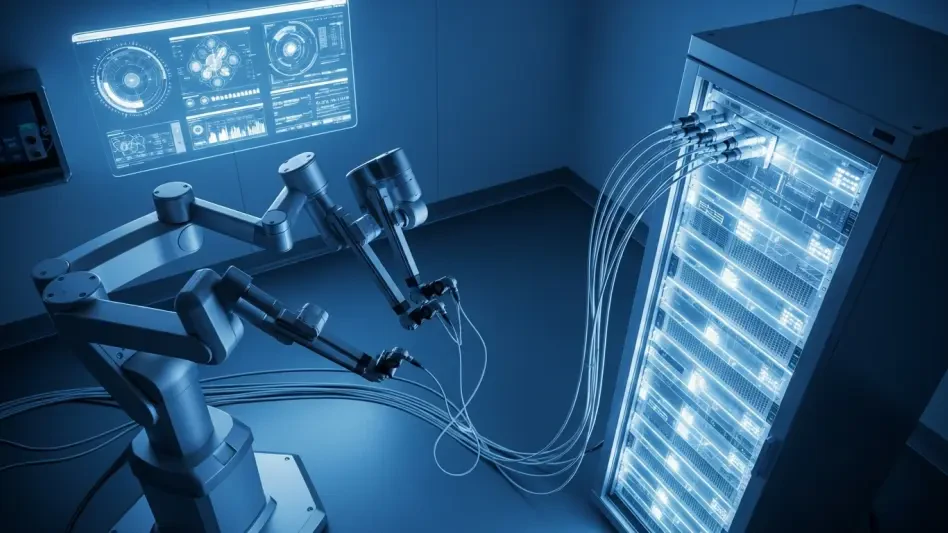

While state-sponsored actors continue to refine precision tools, the immediate crisis stems from the industrialization of low-level cybercrime. The integration of the Model Context Protocol (MCP) with third-party AI services has enabled “ugly-chained” attacks: automated sequences that connect disparate tools to scan, exploit, and extort without human intervention. The cost of executing each attempt has dropped so far that the law of large numbers all but guarantees some of them will succeed.

These attacks may be technically flawed or inelegant, but their volume compensates for the lack of polish. By automating the discovery of misconfigured servers and vulnerable databases, amateurs can cast a wider net than ever before. Under this industrial approach to exploitation, no organization is too small to be targeted: the tooling picks victims by how easy they are to breach, not by how valuable they are.
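
The cumulative-probability reasoning behind this "volume beats precision" claim can be written down with a deliberately simple model. Assume, purely for illustration, that each automated attempt succeeds independently with the same small probability p; the chance that at least one of n attempts succeeds is then given below, worked for hypothetical values p = 0.001 and n = 5000 (not measured data).

```latex
\begin{aligned}
P(\text{at least one success}) &= 1 - (1 - p)^{n} \\
1 - (1 - 0.001)^{5000} &\approx 1 - e^{-5} \approx 0.993
\end{aligned}
```

Under these assumptions, a one-in-a-thousand hit rate becomes a near-certainty after a few thousand attempts. Real attempts are neither independent nor equally likely to succeed, so the numbers show the shape of the argument rather than an estimate of any real campaign.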

The Anatomy of the Half-Baked Threat

Novices are increasingly using AI agents to bridge the technical gaps between scanning tools and exploit delivery. By relying on large language models (LLMs) to write scripts and interpret error messages, they bypass the need for manual coding skills. This “half-baked” approach often produces “broken” ransomware that encrypts files without any working mechanism for decryption. In these cases, incompetence is more destructive than intent: the data is lost permanently whether or not a ransom is paid.

Furthermore, AI-driven automation allows amateurs to weaponize newly disclosed vulnerabilities in hours rather than days. This shrinking patch-to-exploit window leaves defenders with almost no time to react before an automated botnet attempts to breach their perimeter. Additionally, these tools eliminate the grammatical errors and awkward phrasing that once served as red flags for phishing, making social engineering attempts nearly indistinguishable from legitimate corporate communications.

Expert Insights: Fighting Defender Fatigue

Cynthia Kaiser, a former FBI cybersecurity official, notes that the true danger lies in the “noise” created by these automated campaigns. Google Cloud’s Jennifer Burnside echoes this view, warning that Security Operations Centers (SOCs) are reaching a breaking point. When automation multiplies the volume of minor alerts, human analysts become overwhelmed, leading to burnout and to missed signals that might indicate a more serious breach.

Experts argue that manual triage is no longer a viable strategy for modern enterprises. If an adversary can iterate at the speed of a processor, a human-led defense is destined to fail. The consensus in the industry is that the only way to combat the tidal wave of novice-driven AI attacks is to remove the human bottleneck from the initial response phase, allowing machines to fight machines.
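
At its simplest, removing the human bottleneck from initial response can mean automated alert deduplication: collapse repeated alerts into clusters and surface only the clusters that are severe or unusually bursty. The sketch below is a hypothetical illustration, not any vendor's SOC product or the method of the experts quoted above. The `Alert` fields, the 1 to 5 severity scale, and the `window_s`, `escalate_severity`, and `escalate_count` thresholds are all assumptions chosen for the example.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Alert:
    """Hypothetical alert record; real SIEM schemas differ."""
    rule_id: str      # identifier of the detection rule that fired
    source_ip: str    # origin of the suspicious activity
    severity: int     # assumed scale: 1 (informational) to 5 (critical)
    timestamp: float  # seconds since the epoch


def triage(alerts, window_s=300.0, escalate_severity=4, escalate_count=20):
    """Collapse repeated alerts into clusters and return only those worth a human's time.

    Alerts with the same (rule_id, source_ip) that arrive within window_s seconds
    of a cluster's first alert are merged into that cluster.
    """
    clusters = defaultdict(list)  # (rule_id, source_ip) -> list of clusters
    for alert in sorted(alerts, key=lambda a: a.timestamp):
        key = (alert.rule_id, alert.source_ip)
        groups = clusters[key]
        if groups and alert.timestamp - groups[-1][0].timestamp <= window_s:
            groups[-1].append(alert)  # same burst: fold into the current cluster
        else:
            groups.append([alert])    # new burst: start a fresh cluster

    escalated = []
    for (rule_id, source_ip), groups in clusters.items():
        for group in groups:
            max_severity = max(a.severity for a in group)
            # Escalate if any alert is severe, or if the burst itself is large.
            if max_severity >= escalate_severity or len(group) >= escalate_count:
                escalated.append({
                    "rule_id": rule_id,
                    "source_ip": source_ip,
                    "count": len(group),
                    "max_severity": max_severity,
                    "first_seen": group[0].timestamp,
                })
    # Most severe first; larger bursts break ties.
    return sorted(escalated, key=lambda c: (-c["max_severity"], -c["count"]))


if __name__ == "__main__":
    # Made-up demo data: 100 low-severity scan alerts from one address,
    # one credential-stuffing alert, and three stray DNS anomalies.
    alerts = [Alert("port-scan", "203.0.113.7", 1, float(t)) for t in range(100)]
    alerts.append(Alert("cred-stuffing", "198.51.100.9", 4, 50.0))
    alerts += [Alert("dns-anomaly", "192.0.2.1", 2, float(t)) for t in range(3)]
    for cluster in triage(alerts):
        print(cluster)
```

In the demo, 104 raw alerts reduce to two escalated items: the credential-stuffing alert and a single port-scan cluster summarizing 100 alerts, while the three DNS anomalies fall below both thresholds. Fixed thresholds keep the sketch readable; the values here are arbitrary and would need tuning against real alert volumes.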

Strategic Frameworks for an Automated Threat Landscape

To counter this evolving threat, organizations must adopt autonomous defensive agents capable of operating at machine speed. These agents can handle labor-intensive triage and incident response, freeing human experts to focus on complex threat hunting. Identity hardening through robust multi-factor authentication and zero-trust architectures also remains essential to limit the damage that stolen credentials can do.

Accelerated vulnerability management and near-real-time patching cycles are becoming the standard for maintaining a resilient posture. Organizations that adopt immutable backups and rapid restoration workflows are better positioned to survive “ugly-chained” ransomware. The focus is shifting toward systems that prioritize recovery and automated defense, so that even if a novice attacker finds a gap, the resulting damage remains contained and temporary.